Peking University - Georgia Institute of Technology - Emory University

Hi, my name is Shuang Zeng, and welcome to my homepage. I received my bachelor's degree in Engineering from Peking University, Beijing, China, in 2021. I am currently a joint Ph.D. student in Biomedical Engineering at Peking University, Georgia Institute of Technology, and Emory University. I am very fortunate to be advised by Prof. Qiushi Ren and Assistant Professor Dr. Yanye Lu in MILab at the College of Future Technology, PKU, and by Prof. May Dongmei Wang in Bio-MIBLab at the Wallace H. Coulter Department of Biomedical Engineering, Georgia Institute of Technology and Emory University. My research interests focus mainly on self-supervised contrastive learning, large language models, explainable AI, and medical image processing. Feel free to reach out to me for communication and collaboration!

Education

- Peking University, Biomedical Engineering, College of Future Technology

  Ph.D. student in MILab, Sep. 2021 - present

- Georgia Institute of Technology, Wallace H. Coulter Department of Biomedical Engineering

  Joint Ph.D. student in Bio-MIBLab, Jan. 2025 - present

- Peking University, Biomedical Engineering, College of Engineering

  B.S. student, Sep. 2017 - Jul. 2021

Experience

- ByteDance, Platform Governance, TikTok Shop

  Intern (agent development), Mar. 2026 - present

Honors & Awards

- IEEE BHI 2025 NSF-EMBS-Google Sponsored Young Professional NextGen Scholar Recognition, 2025.9
- The First Prize of the 33rd Challenge Cup May Fourth Youth Science Award Competition of Peking University, 2025.6
- Award for Science Research of Peking University, 2024.12
- The Third Prize of Peking University Scholarship, 2024.12
- BMEJ Fellowship Award of Georgia Institute of Technology, 2024.1
- Award for Science Research of Peking University, 2022.12
- Excellent Graduate of Peking University, 2021.6
- Outstanding Undergraduate Scientific Research Program of School of Engineering, Peking University, 2021.6
- Leo Kaiyuan Scholarship, 2020.12
- Merit Student of Peking University, 2020.12
- The Third Prize of Peking University Scholarship, 2019.12
- Award for Scientific Research of Peking University, 2019.12

Academic Service

- Conference reviewer: CVPR 2026, ECCV 2026, MICCAI 2026
- Journal reviewer: IEEE TIP, TNNLS, TMI, ToC (Transactions on Cybernetics), JBHI

Selected Publications

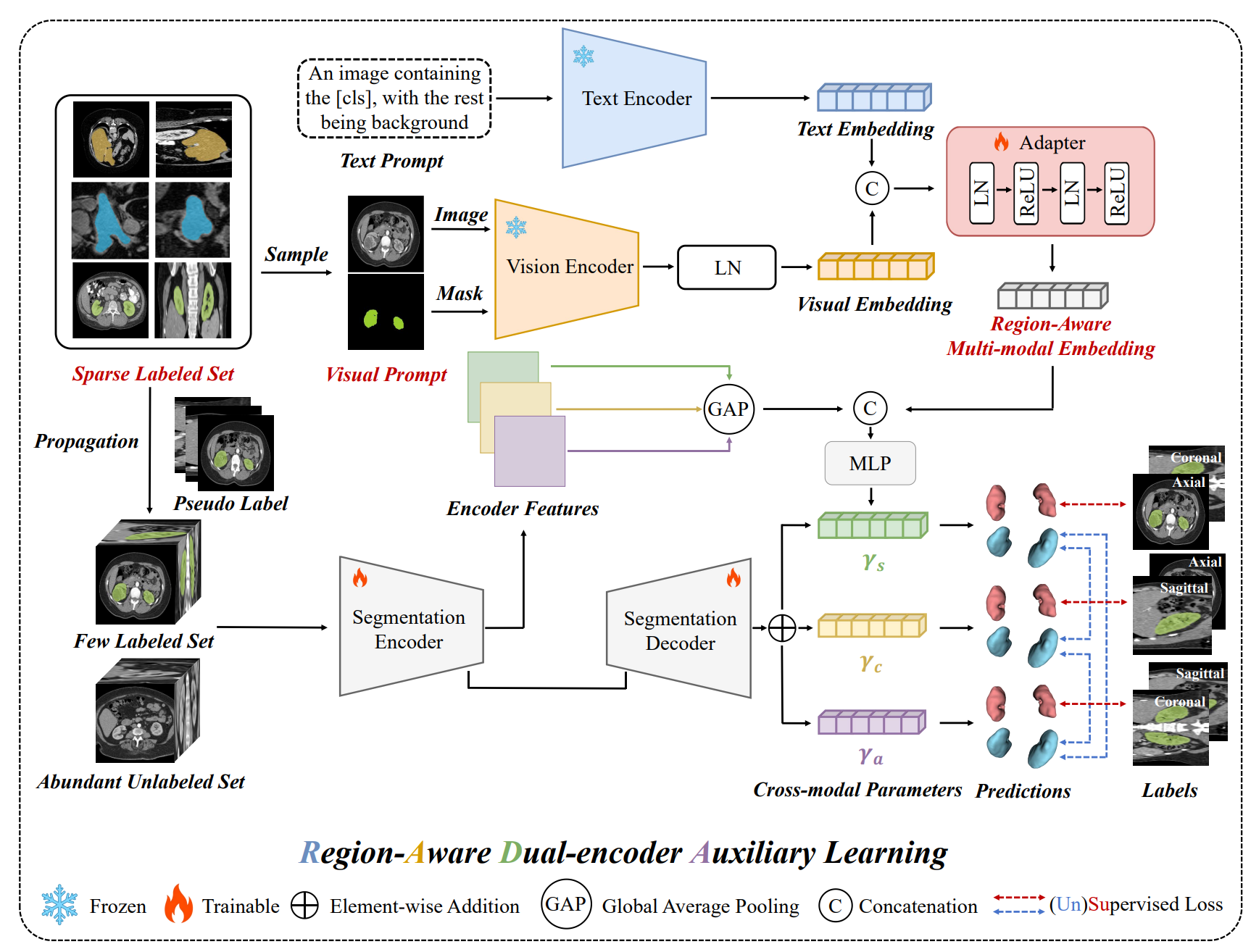

RADA: Region-Aware Dual-encoder Auxiliary Learning for Barely-supervised Medical Image Segmentation

Shuang Zeng, Boxu Xie, Lei Zhu, Xinliang Zhang, Jiakui Hu, Zhengjian Yao, Yuanwei Li, Yuxing Lu, Yanye Lu# (# corresponding author)

arXiv 2026

We propose RADA, a novel Region-Aware Dual-encoder Auxiliary learning pipeline that introduces a dual-encoder framework pre-trained on Alpha-CLIP to extract fine-grained, region-specific visual features from the original images and limited annotations for barely-supervised medical image segmentation.

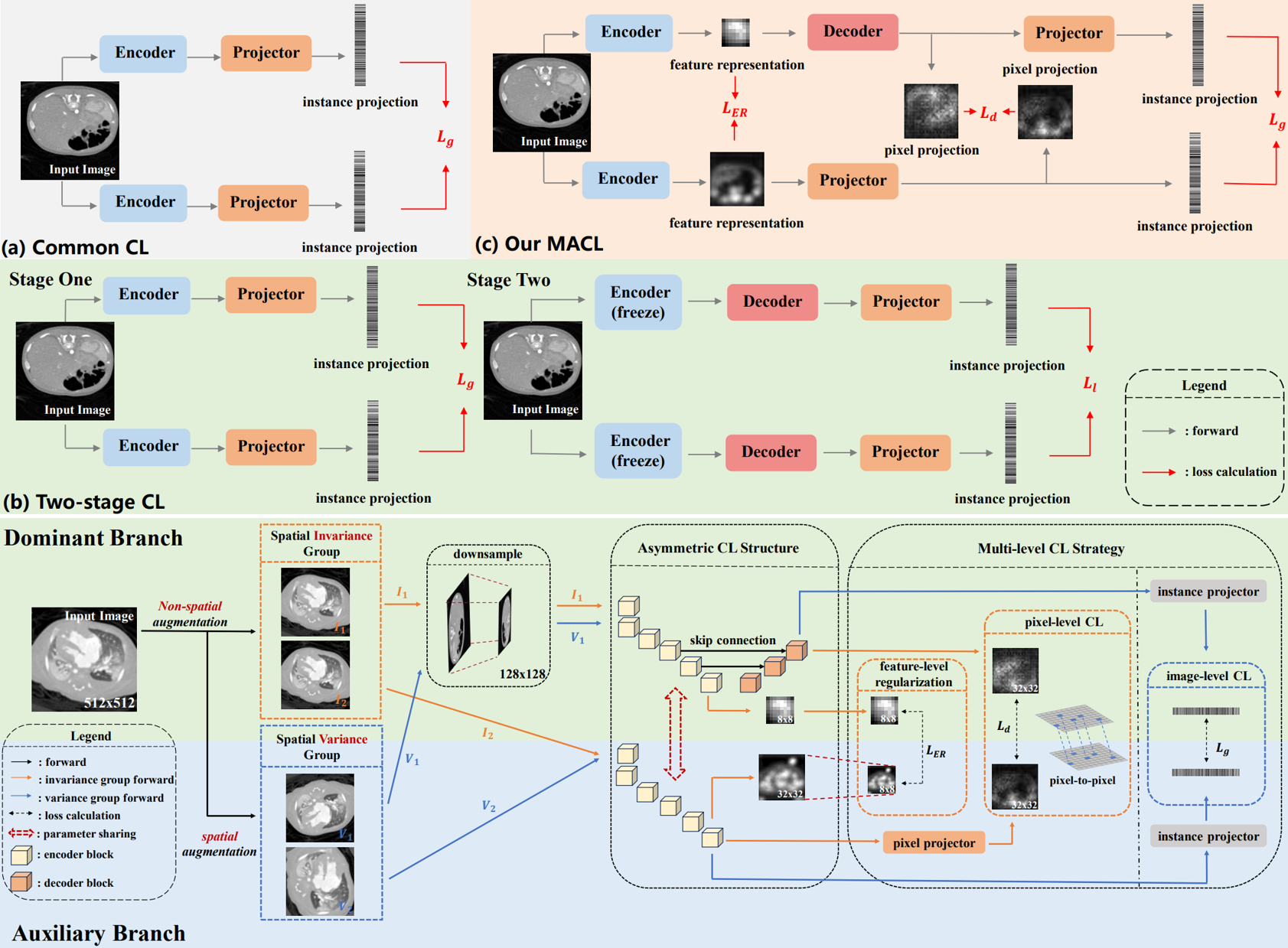

Multi-level Asymmetric Contrastive Learning for Medical Image Segmentation Pre-training

Shuang Zeng, Lei Zhu, Xinliang Zhang, Qian Chen, Hangzhou He, Lujia Jin, Zifeng Tian, Zhaoheng Xie, Micky C Nnamdi, Wenqi Shi, J Ben Tamo, May D. Wang, Yanye Lu# (# corresponding author)

IEEE Journal of Biomedical and Health Informatics 2026 (CAS Zone 2 Top, IF: 6.8)

We propose a novel Multi-level Asymmetric Contrastive Learning framework named MACL by introducing an asymmetric CL structure and a multi-level CL strategy to realize one-stage encoder-decoder synchronous pre-training for medical image segmentation.

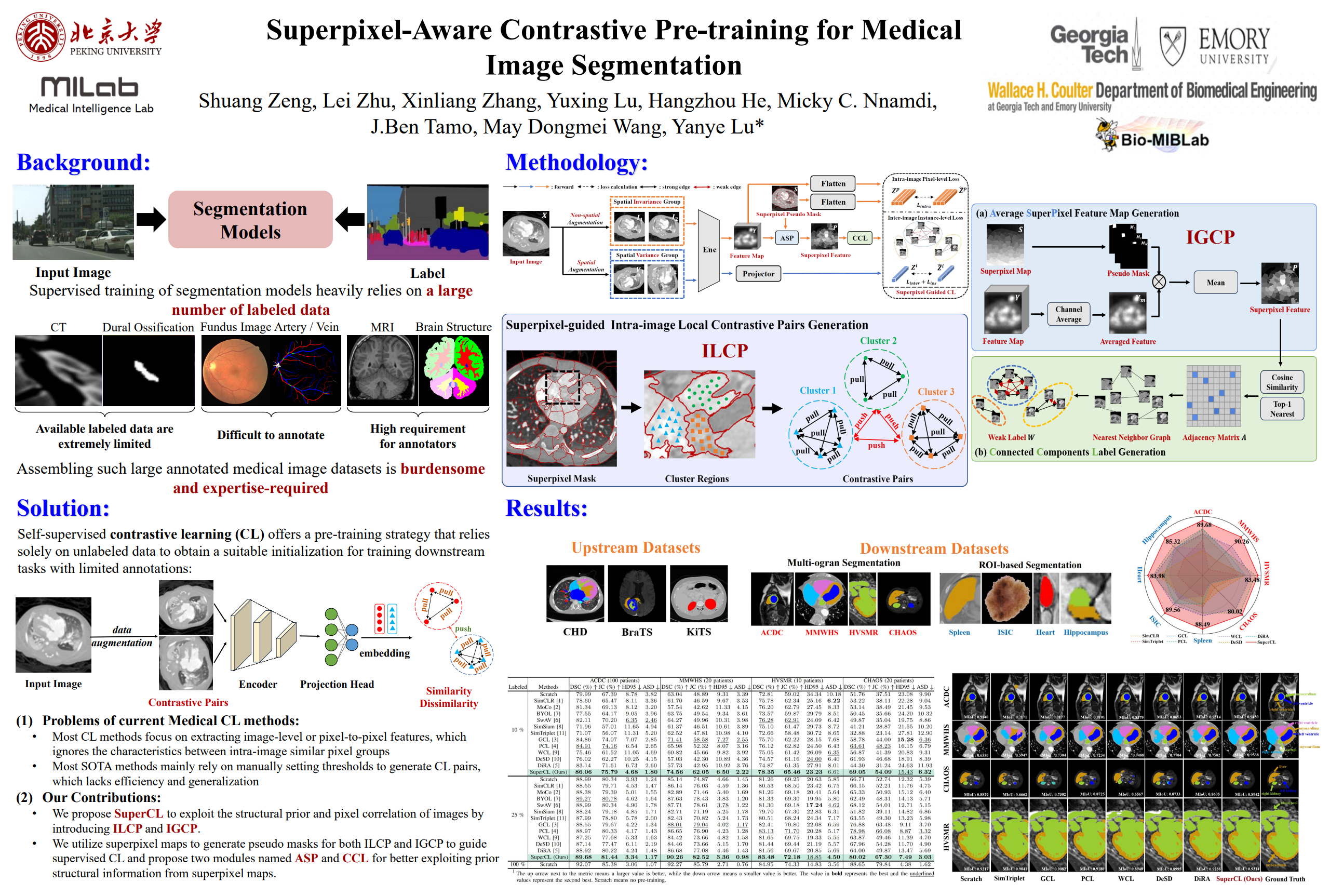

SuperCL: Superpixel Guided Contrastive Learning for Medical Image Segmentation Pre-training

Shuang Zeng, Lei Zhu, Xinliang Zhang, Hangzhou He, Yanye Lu# (# corresponding author)

IEEE Transactions on Image Processing 2026 (CAS Zone 1 Top, IF: 13.7)

We propose SuperCL, a superpixel-guided contrastive learning framework for medical image segmentation pre-training, which exploits the structural prior and pixel correlation of images by introducing two novel contrastive pair generation strategies: Intra-image Local Contrastive Pairs (ILCP) Generation and Inter-image Global Contrastive Pairs (IGCP) Generation.

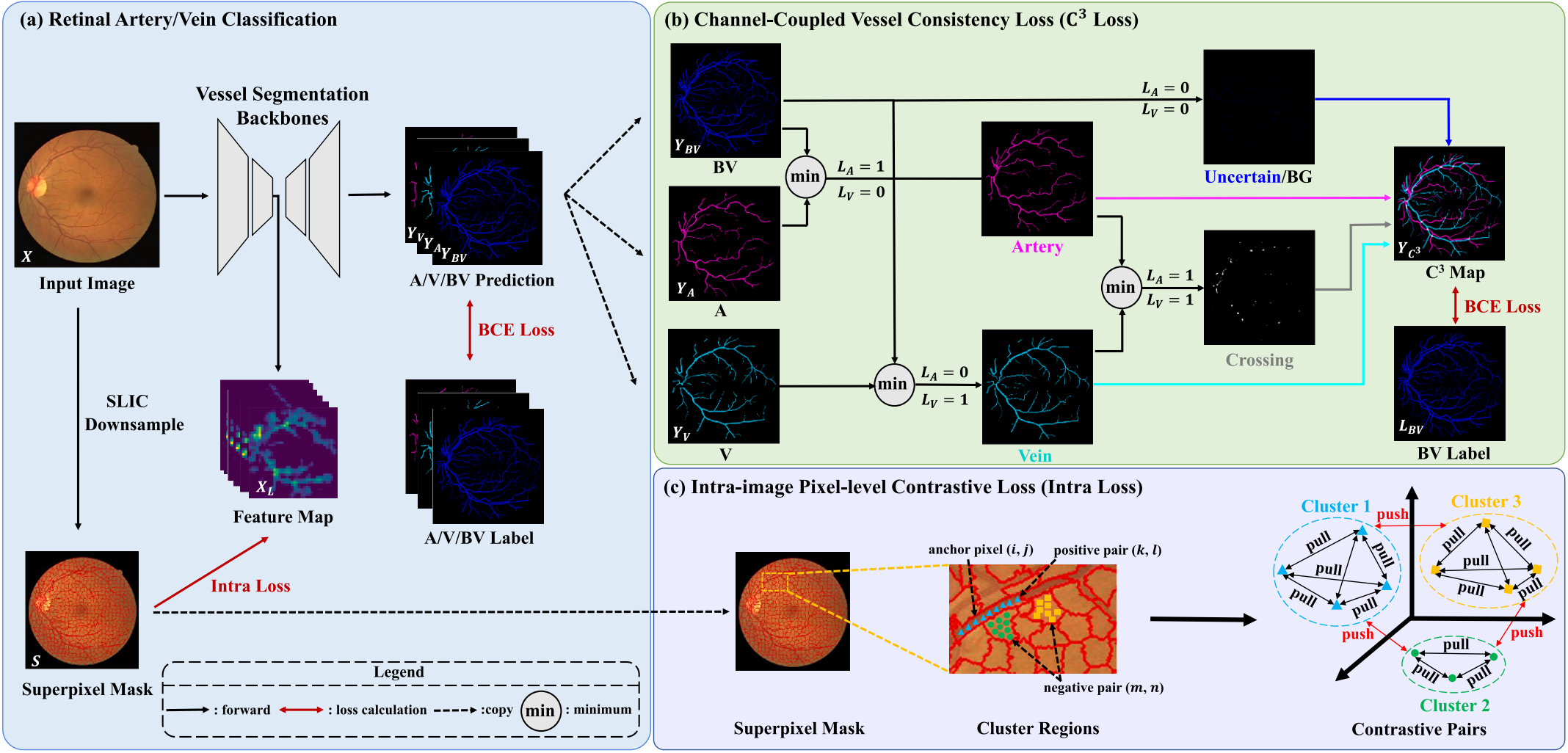

Improve retinal artery/vein classification via channel coupling

Shuang Zeng, Chee Hong Lee, Kaiwen Li, Boxu Xie, Ourui Fu, Hangzhou He, Lei Zhu#, Yanye Lu#, Fangxiao Cheng# (# corresponding author)

Expert Systems With Applications 2025 (CAS Zone 1 Top, IF: 7.5)

We design a novel loss named Channel-Coupled Vessel Consistency Loss to enforce the coherence and consistency between vessel, artery and vein predictions and a regularization term named intra-image pixel-level contrastive loss to extract more discriminative feature-level fine-grained representations for accurate retinal A/V classification.

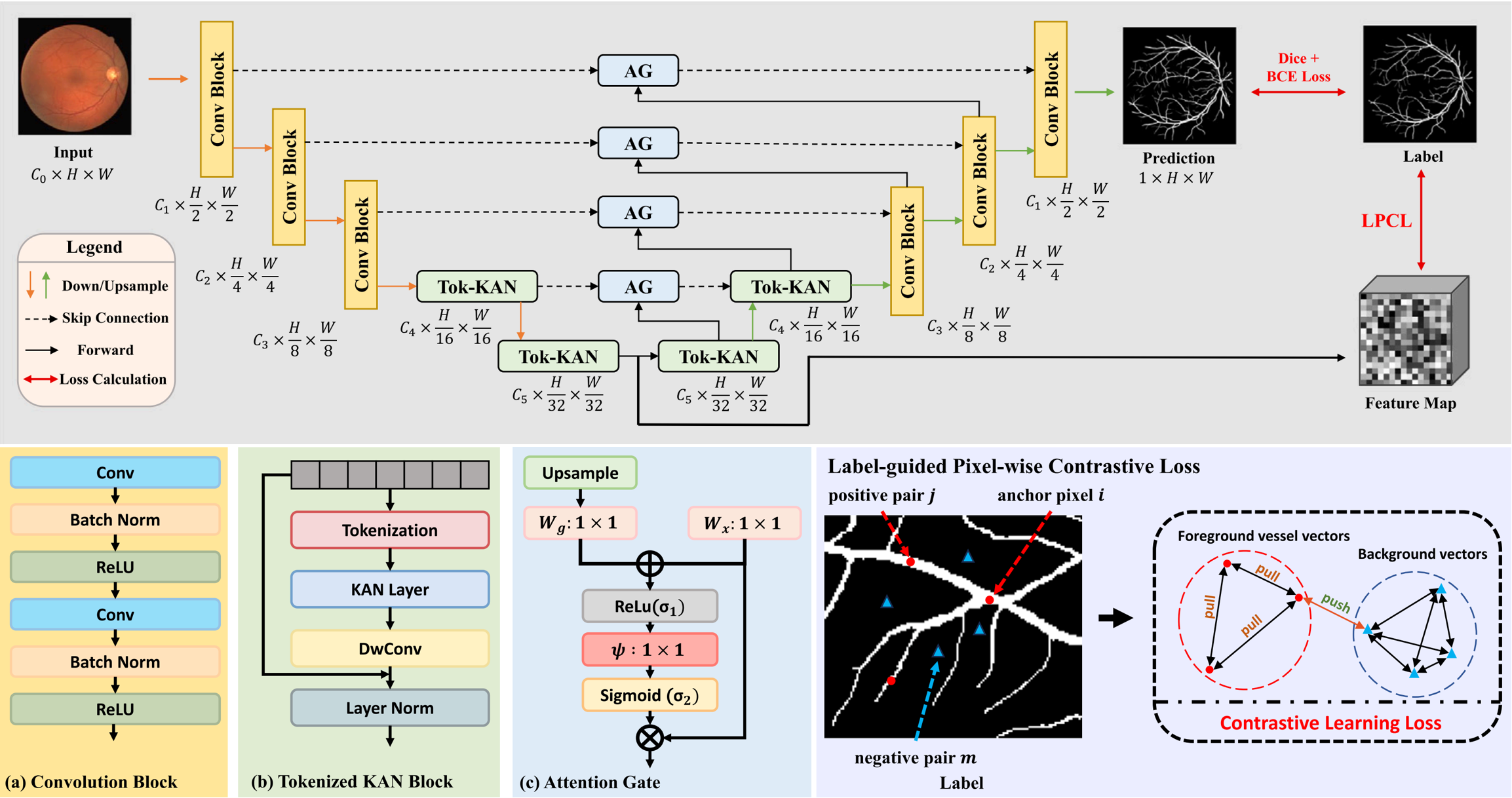

Novel extraction of discriminative fine-grained feature to improve retinal vessel segmentation

Shuang Zeng*, Chee Hong Lee*, Micky C. Nnamdi, Wenqi Shi, J. Ben Tamo, Hangzhou He, Xinliang Zhang, Qian Chen, May D. Wang, Lei Zhu#, Yanye Lu#, Qiushi Ren# (* equal contribution, # corresponding author)

Image and Vision Computing 2025

We propose a new retinal vessel segmentation model named AttUKAN to selectively filter skip connection features and a Label-guided Pixel-wise Contrastive Loss (LPCL) to extract more discriminative features by distinguishing between foreground vessel-pixel sample pairs and background sample pairs.
